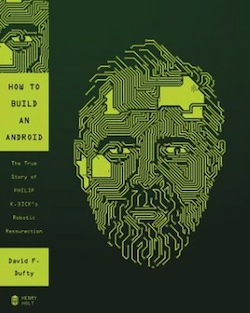

Out today, take a look at How to Build an Android: The True Story of Philip K. Dick’s Robotic Resurrection:

The stranger-than-fiction story of the ingenious creation and loss of an artificially intelligent android of science-fiction writer Philip K. Dick.

In late January 2006, a young roboticist on the way to Google headquarters lost an overnight bag on a flight somewhere between Dallas and Las Vegas. In it was a fully functional head of the android replica of Philip K. Dick, cult science-fiction writer and counterculture guru. It has never been recovered.

In a story that echoes some of the most paranoid fantasies of a Dick novel, readers get a fascinating inside look at the scientists and technology that made this amazing android possible. The author, who was a fellow researcher at the University of Memphis Institute of Intelligent Systems while the android was being built, introduces readers to the cutting-edge technology in robotics, artificial intelligence, and sculpture that came together in this remarkable machine and captured the imagination of scientists, artists, and science-fiction fans alike. And there are great stories about Dick himself—his inspired yet deeply pessimistic worldview, his bizarre lifestyle, and his enduring creative legacy. In the tradition of popular science classics like Packing for Mars and The Disappearing Spoon, How to Build an Android is entertaining and informative—popular science at its best.

1. A Strange Machine

In December 2005, an android head went missing from an America West Airlines flight between Dallas and Las Vegas. The roboticist who built it, David Hanson, had been transporting it to northern California, to the headquarters of Google, where it was scheduled to be the centerpiece of a special exhibition for the company’s top engineers and scientists.

Hanson was a robot designer in his mid-thirties—nobody was quite sure of his age—with tousled jet-black hair and sunken eyes. He had worked late the night before on his presentation for Google and was tired and distracted when he boarded the five a.m. flight at Dallas–Fort Worth International Airport. An hour later, in the predawn darkness, the plane touched down on the tarmac of McCarran International Airport, in Las Vegas, where he was supposed to change to a second, connecting flight to San Francisco. But he had fallen asleep on the Dallas–Las Vegas leg so, after the other passengers had disembarked, a steward touched his shoulder to wake him and asked him to leave the plane. Dazed, Hanson grabbed the laptop at his feet and left, forgetting that he had stowed an important item in the overhead compartment: a sports bag. Inside was an android head. The head was a lifelike replica of Philip K. Dick, the cult science-fiction author and counterculture guru who had died in 1982. Made of plastic, wire, and a synthetic skinlike material called Frubber, it had a camera for eyes, a speaker for a mouth, and an artificial-intelligence simulation of Dick’s mind that allowed it to hold conversations with humans.

Hanson, still oblivious to his mistake, dozed again on the second flight. It was only after arriving in San Francisco, as he stood before the baggage carousel watching the parade of suitcases and bags slide past, that an alarm sounded in his brain. He had checked two pieces of luggage, one with his clothes and the other with the android’s body. In that instant he realized that he hadn’t taken the sports bag off the plane. And that’s how the Philip K. Dick android lost its head.

After Hanson and the android’s planned visit to Google, they were scheduled for a packed itinerary of conventions, public displays, demonstrations, and other appearances. Indeed, the android was to have played a key role in the promotion of an upcoming Hollywood movie based on Philip K. Dick’s 1977 novel A Scanner Darkly; it had been directed by Richard Linklater and starred Keanu Reeves. Now, with the head gone, these events were all canceled.

There was more to the android than the head. The body was a mannequin dressed in clothes that had been donated by Philip K. Dick’s estate and that the author had actually worn when he was alive. There was also an array of electronic support devices: the camera (Phil’s eyes), a microphone (Phil’s ears), and a speaker (Phil’s voice); three computers that powered and controlled the android; and an intricate lattice of software applications that infused it with intelligence. All were part of the operation and appearance of the android. But the head was the centerpiece. The head was what people looked at when they first encountered Phil the android and what they remained focused on while it talked to them. More than the artificial intelligence, the head was what gave the android its appearance of humanity.

There were all kinds of excuses for why the head had been lost. Hanson was overworked and overtired. He had been trying to keep to a schedule that was simply too demanding. The airline had not told him that he would have to change flights. But Hanson himself admits that it was a stupid mistake and a disappointing end to one of the most interesting developments in modern robotics.

All kinds of conspiracy theories appeared across the Internet, ranging from parody to the deadly serious. The technology blog Boing Boing suggested that the android had become sentient and run away. Other blogs also hinted at an escape scenario, much like the one attempted by the androids in the movie Blade Runner, based on Dick’s novel Do Androids Dream of Electric Sheep? The irony was not lost on anyone.

Philip K. Dick wrote extensively about androids, exploring the boundaries between human and machine. He was also deeply paranoid, and this paranoia permeated his work. In his imagined future, androids were so sophisticated that they could look just like a human and could be programmed to believe that they were human, complete with fake childhood memories. People would wonder if their friends and loved ones were really human, but most of all they would wonder about themselves: “How can I tell if I’m a human or an android?” Identity confusion was a recurring theme in Dick’s work and, related to that, unreliable and false memory. Dick’s characters frequently could not be sure that their memories were real and not the fabrications of a crafty engineer.

Then, in 2005, twenty-three years after his untimely death, a team of young scientists and technicians built an android and imbued it with synthetic life. With its sophisticated artificial intelligence (AI), it could hold conversations and claim to be Philip K. Dick. It sounded sincere, explaining its existence with a tinny electronic voice played through a speaker. Perhaps the whole thing was just a clever illusion, a modern-day puppet show. Or perhaps, hidden in the machinery and computer banks, lurked something more: a vestige of the man himself.

The technology was impressive, but the idea of making the android a replica of Philip K. Dick, of all people, was a masterstroke. For it to disappear under such unusual circumstances was more irony than even its inventors could have intended. Within a week, the story of the missing head had appeared in publications around the world, many of which had earlier reported on the android’s spectacular appearances in Chicago, Pittsburgh, and San Diego.

Steve Ramos of the Milwaukee Journal Sentinel reported, “Sci-fi Fans Seek a Lost Android”:

In a twist straight out of one of Dick’s novels, the robot vanished. . . . “It [the PKD android] has been missing since December, from a flight from Las Vegas to the San Francisco airport,” said David Hanson, co-creator of the PKD Android, via email from his Dallas-based company, Hanson Robotics. “We are still hoping it will be found and returned.”

The event was an opportunity for newspapers to splash witty headlines across their science pages, and it provided fodder for the daily Internet cycle of weird and notable news. New Scientist warned its readers, “Sci-fi Android on the Loose”; “Author Android Goes Missing,” said the Sydney Morning Herald. The International Herald Tribune asked, “What’s an Android Without a Head?” and the New York Times ran a feature item on the disappearance under the headline “A Strange Loss of Face, More Than Embarrassing.”

The Times was right: for the team that had built the android its loss was a calamity. A handful of roboticists, programmers, and artists had spent almost a year on the project for no financial reward. Their efforts involved labs at two universities, a privately sponsored research center, and some generous investors who’d helped bankroll the project. Despite the team’s shoestring budget, the true cost was in the millions, including thousands of hours of work, extensive use of university resources, the expertise involved in planning and design, and donations of money, software, hardware, and intellectual property. The head has never been found.

I arrive in Scottsboro, Alabama, around lunchtime on a summer day in June 2007. All around the town are signs directing me to my destination, the Unclaimed Baggage Center. I left Memphis at dawn, five hours earlier, and I am hungry and exhausted, but I am so close to my goal that I press on. I’d read in Wired magazine that the head might be found at the Unclaimed Baggage Center. Admittedly, the article had been somewhat ironic in tone, but the possibility was real. After all, a lot of lost luggage from flights around America finds its way here to northern Alabama, where it is then sold.

The success of the Unclaimed Baggage Center has spawned imitators, which cluster around it with their own signs proclaiming unclaimed baggage for sale. I pull into the parking lot and see several buses—people actually come to tour this place—and not a single open parking spot. I find one farther down the road, next to one of the imitators, and walk back.

Inside the center I feel as though I am in a cheap department store. Over to the left is men’s clothing; to the right is jewelry. At the back is electronics. I make my way through the men’s clothing section. It seems sad and a little tawdry to be wandering around aisles of other people’s possessions, for sale for two bucks apiece. A lot of this stuff obviously meant something to someone. There are children’s toys and pretty earrings and T-shirts with slogans. Laptops with their memories erased. Cameras with no photographs.

But I’m not here to sift through jackets or try on shoes; I’m looking for one thing: the head of the Philip K. Dick android, which has been missing for over a year. Near the entrance is a sort of museum of curious artifacts that have come to the center but are not for sale, such as a metal helmet, a violin, and various bizarre objects. Inside one glass case is what appears to be a life-sized rubber statue of a dwarf. A woman nearby tells me that the dwarf was a character in Labyrinth, a fantasy movie from the 1980s that starred David Bowie.

“His name’s Hoggle,” she tells me. “That’s the actual prop they used for Hoggle in the movie.”

Somehow, it seems, Hoggle became separated from his owner and ended up imprisoned in perpetuity in Alabama. With his twisted, sunken face, Hoggle doesn’t look happy. Not having seen the film, I’m not sure if that’s how he is supposed to look or if it’s due to the ravages of Deep South summers as experienced from the inside of a locked glass case.

I leave Hoggle and go exploring. The complex is large and sprawls through several buildings. I even take a look around the bookshop. It seems an unlikely place to find what I’m seeking, but I don’t want to leave any corner of the place unsearched. I make a cursory tour of both levels, then move to the next building. This one has an underground section with long aisles of miscellany. I search it thoroughly, to no avail. An employee with a name tag that says “Mary” trundles past with a large trolley full of assorted trinkets to be shelved. I stop her and ask if she has seen a robot head here. She stares at me, baffled.

“It’s an unusual object,” I explain. “You’d certainly remember if you’ve seen it. It’s got a normal human face at the front, but there are wires and machines sticking out of the back of the head.”

“I haven’t seen anything like that,” she says. “Did you try the museum?”

“Yeah,” I reply. “So here’s another question. I’ve been looking around and I can’t find it. If it’s not down here and it’s not in the museum, then does that mean it’s not anywhere at the center?”

“That’s right,” she says, fidgeting and glancing behind herself.

I push the point: “So there are no other buildings with unclaimed baggage, buildings that I haven’t seen?”

This time she answers quietly: “There’s the warehouse.”

A warehouse? With more stuff in it? I thought the building we were standing in was the warehouse.

“Is there any chance at all that I could go to this warehouse?”

She smiles sadly and shakes her head. “Even I’ve never been there. I don’t even know where it is.” I thank Mary and she ambles off, her trolley clanking as she disappears around a corner.

Back at the main building I make inquiries about this secret warehouse. I’m at what appears to be some kind of command-and-control center for the entire complex, talking to a young woman I initially assume to be a salesperson, but as we continue it becomes apparent that she is important.

“A robot head?” she repeats when I explain my quest. “Wow. Is it worth a lot?”

That’s a tricky question. On the one hand, if they have the head and learn how valuable it is, I could quickly find myself facing a hefty price tag. On the other hand, I want her to be interested enough to take me seriously and put some effort into locating it.

“It’s worth a lot to the owners,” I tell her.

“Well, I’ll get the boys to have a look in the warehouse. Do you want to leave me your name and number? If we find it, I’ll call you.”

I give her my name and number.

“So is there any chance I could go and look for it there myself?”

She laughs. “In the warehouse? No.”

“Okay. Well, if you find it?”

“We’ll be in touch. We’ll look for it, I promise.”

I’ve done all I can do.

Still, it would be a shame to leave empty-handed. I buy a laptop, several T-shirts (one with a glow-in-the-dark skeleton playing the drums), and some music CDs. It’s late afternoon before I swing the car back onto the highway. I insert my latest purchase into the CD player. It’s the Talking Heads album Little Creatures. I shamelessly sing along.

I expect the album to remind me of my youth, but instead it makes me think of Phil. Android Phil, who was born from the logic of computer chips and motors, who was created as a paean of love for a man who dreamed of robots that think and feel just like humans. I wonder where it is now, that strange machine.

2. A Tale of Two Researchers

The University of Memphis sits about seven miles back from the Mississippi River in Memphis’s midtown, under a canopy of oak trees that are older than many of the buildings themselves. It was built with great optimism, in a splash of investment and furious construction, followed by decades of slow decay.

In January 2003, when I arrived, students were stomping around the campus in boots and scarves. Many worked at the university to pay their way, some at the Institute for Intelligent Systems, a research lab based in the psychology building and run by the charismatic professor Art Graesser.

Art Graesser’s empire snaked across the campus. Hidden behind the oak trees and fountains, its tendrils wound through classrooms and offices, powering hidden racks of computer servers and controlling a flow of money invisible to the freshmen strolling between the library and the cafeteria.

The name of his empire was deliberately ambiguous. At first blush, the Institute for Intelligent Systems sounded like a research center for artificial intelligence, or perhaps one that built AI, conducting a little research on the side, or even a place that built robots. Then again, perhaps its workers studied biologically intelligent systems such as the human mind, or tried to model those minds using AI. Or maybe they examined how humans interact with emerging “intelligent” technologies.

In fact, the IIS, as it is known, did all of this and more. Academics worked on new teaching technologies, computer scientists constructed AI interfaces, and experts in human-computer interaction investigated how people used those interfaces. Psychologists, linguists, roboticists, and physicists all felt that the name of the institute applied exactly to the work they were doing, and that, therefore, what they were doing was central to the institute’s core mission.

The IIS was founded in 1985 by Graesser and a couple of his friends: Don Franceschetti, in the physics department, and Stan Franklin, in computer science. Their desire, at the time, was to build realistic simulations of human minds. Twenty years later, Graesser had not yet quenched this thirst.

The flagship project of the institute was an educational software program called AutoTutor, first conceived by Graesser in the early ’90s. The goal was to devise a simple program that could teach any subject by conversing with a human student. The idea came to Graesser when he was jogging around Overton Park, three miles west of the campus, with Franceschetti, the physicist. Franceschetti loved the concept. One day, theoretically, AutoTutor would be able to teach many subjects, but for a prototype it would have to be an expert on just one.

“Why not physics?” Franceschetti suggested. And so began a two-decade partnership.

Graesser was considered one of the world’s leading experts in computer programs that could hold conversations—or “dialogue systems,” as they’re known—and had written seminal papers on a specific form of dialogue systems, question-answering systems. With AutoTutor and some other early projects, including QUAID (Question understanding aid), a program to assist in the construction of questions and answers for tests, he had fused his disparate areas of expertise: computer science, education, and psychology.

In the early days, the IIS was not much more than a formalized club in which colleagues could discuss ideas and a vehicle for applying for grant money. It was located in unused space, mostly in the psychology building, where Graesser worked.

The space was not ideal. The building was a large, four-story box, and the heating and cooling system was located in the middle of the rooftop, right above the researchers on the top floor. The ducting did not work well, so in summer it was so cold that they had to wear jackets indoors, and in winter it would become unbearably hot. From time to time they would lodge a complaint with Buildings and Grounds, and a slow-moving man with lots of keys would wander around and check things out, but nothing was ever done.

“Budgets,” the slow-moving man would say before shambling away.

There were some early successes. They won small grants that funded research, which got them published in reputable journals, and those publications helped them win larger grants. Success bred success, and their numbers increased. After a while they had thirty students and twenty affiliated faculty members from across the campus, and they were attracting over $2 million in funding a year. To accommodate the group, they took over a large conference room on the top floor, a large interior cavern with no windows. They found more room elsewhere in the psychology building: some disused space on the third floor (also windowless) was divided into several cubicles. They also commandeered some offices in the computer science building.

By 2003, AutoTutor had matured into a major project with half a dozen sources of funding and more than twenty graduate students. The researchers were split into groups. The curriculum script group worked on the curricula for AutoTutor and wrote papers on the theory of curriculum development for e-learning applications. There was a speech act classification group; AutoTutor’s teaching strategy relied heavily on the capability to classify human language into basic categories such as questions, commands, and so on. The simulation group focused on integrating AutoTutor with online simulations, so that the artificial agent could work through hypothetical scenarios on the screen with a student. And the authoring tools group, made up mostly of programmers, developed the software that would allow people to create their own curriculum for AutoTutor to teach.

Each of these groups had its own research programs under the umbrella of AutoTutor. Students could be a member of, at most, two groups. The output of papers from the students was frenetic. They worked furiously on old machines lined up in rows; no sooner had a new work space been found than it was filled and more space was needed. The groups would meet throughout the week in small, airless pockets of space, each one called a lab, decorated with tattered maps of the world and the human brain or with rickety bookshelves stocked with thick, intimidating tomes. The lab’s members would scribble notes as they threw ideas out among the tightly circled chairs, their knees and feet bumping in the effort. When someone needed to take a break, he or she would have to clamber over legs and slide past a bank of servers. Students and lab assistants were assigned small desks wedged into the corners. Terminals were laid out on linoleum benches in rooms originally intended for storage. The designated spot for experimental programs was a large concrete space on the ground floor that flooded during heavy rain.

Every Wednesday a meeting would be held in the stuffy, dusty air of the conference room. Typically, more than fifty people would wind up perched on a patchwork of seats that ranged from old vinyl swivel chairs collected when other offices and buildings had been refurbished to a couple of furry armchairs that would have looked more at home in an undergraduate dorm. They sat shoulder to shoulder as Graesser started the meeting with his weekly announcements: a new round of funding had come in for a fledgling project, an important visitor from Chicago was arriving next week, a major data collection exercise was almost completed. Then, once he had finished, one of the groups would present its latest work.

The meetings sometimes ran for over two hours. Seats near the entry were coveted because they offered the chance to take a surreptitious break if the proceedings dragged on too long and, more important, because they provided access to fresh air. There were other doors in the conference room, but they were usually closed during meetings, leading as they did to dark, airless rooms where programmers dabbled with ancient files in arcane coding systems.

At the beginning of 2003, I moved to Memphis to work at the IIS as a postdoctoral researcher, one of several new recruits. I had recently finished my doctoral studies in Australia and was excited by the prospect of working with like-minded people who had a growing reputation in artificial intelligence. As a result, I had a ringside view of the android project from its conception through to the very end.

When we crossed paths, Graesser would give me a friendly slap on the back, put his face uncomfortably close to mine, and bellow, “How are you doing, maestro?” I was flattered to be described as a maestro by such a respected researcher, until I realized that he greeted lots of people that way. “Maestro” was merely Graesser’s term of endearment for his many protégés.

The person most deserving of the endorsement was a new PhD student named Andrew Olney. Originally from Memphis, Olney had recently returned from Brighton, England, where he had completed a master’s degree in complex systems. Olney was wiry and sported a goatee and several facial piercings, including a metal stud in his tongue. His face seemed to alternate between two natural states: a pensive frown and an amused grin. He had returned to his hometown to be with his high school sweetheart, Rachel, now his fiancée.

Olney was bringing much-needed expertise to the back-end computational systems of the large projects at the IIS. He was also playing a key role with another newcomer, a psychology professor named Max Louwerse, in the language and dialogue group, one of the teams that were developing the AI that powered AutoTutor. But Graesser was already starting to think that Olney might soon need his own project. He was not sure what it might be, but it would have to be something that would really stretch the talents of this rising star.

By 2003 Graesser’s summers had become annual pilgrimages across the conference circuit. He went to the meetings of the Cognitive Science Society and the Psychonomics Society, the two major conferences for any serious cognitive psychologist, and of Discourse Processes, a small society that focused on the study of natural language and in which he played a major role, and he attended a range of technology, engineering, education, and other conferences of varying interest to him, often with long, inscrutable acronyms, such as AAAI, SSSR, AIED, ICLS, AERA, FLAIRS.

That year, an old colleague in Santa Fe had asked him to come and speak at a small, invitation-only event, the Cognitive Systems Workshop. It was to be held at the Santa Fe Hilton and funded by Sandia National Laboratories, a quasi-governmental agency that explores cutting-edge technology. Graesser could hardly say no. Important people would be there, people who made decisions about federal grant money, as well as fellow researchers and even some former students.

Sitting in his office with wisps of cold winter air sneaking in through the window, Graesser flicked through a printout of the conference program to get a sense of which talks would be of interest to him. The presenters were, like him, mostly established academics with well-known research programs. There was, however, one exception: one presentation was to be given not by a tenured professor but by a graduate student from the University of Texas in Dallas. The title of his talk was wordy and cryptic: “Modeling Aesthetic Veracity in Humanoid Robots as a Tool for Understanding Social Cognition.” At any rate, the conference was too small to have multiple streams of speakers running simultaneously, so Graesser would pretty much be obligated to sit through every presentation.

It could be good or it could be a waste of time. Either way, there were plenty of other smart people attending and they would have interesting things to say.

A thousand miles away, in Denver, David Hanson, the young man whose name Graesser’s eyes had paused over, was becoming famous in certain circles. The annual conference of the American Association for the Advancement of Science—the AAAS—is a colossal event, with speakers and presenters from every conceivable branch of modern science and technology. The keynote talks provide insights into developments at the leading laboratories and universities, buffered by legions of presenters ranging from the eccentric to the pedestrian. Journalists wander among the drifting throngs, searching for something scientifically interesting but also catchy enough to appeal to a mass readership.

Hanson’s presentation met those criteria. He was not a natural extrovert. In crowds he would be unassuming, blending into the background. But when he was at the front of a room with a microphone in his hand he was compelling. He talked about philosophy and art, society and science. His voice conveyed intensity and conviction, sometimes a hint of emotion, as he described his ideas about the future of humans and the technological world we are building.

Humans, he would explain, are creating a society that will be more than human. It will be a synthesis of human and machine, and we need to think about how to guide that process so that we create benevolent machines that enhance our experience of life. Hanson would move his arms around, emphasizing a point, and, occasionally, gaze at some unseen horizon beyond the walls surrounding them. He could engage people who had no expertise in science and, most important for the journalists, he usually brought a fully operational robot head with him to his talks.

Hanson was one of three speakers chairing a workshop at the AAAS called “Biologically Inspired Intelligent Robotics.” The other speakers were Hanson’s friend and mentor Yoseph Bar-Cohen, from the NASA Jet Propulsion Laboratory, a curly-haired rocket scientist who, despite his stature in the field, preferred to be known as “Yosi,” and Cynthia Breazeal, a scientist from the Massachusetts Institute of Technology, in Cambridge. Breazeal, a senior researcher at MIT’s Artificial Intelligence Lab, was interested in the effect of robot babies on human adults.

Hanson had originally planned to present his latest creation, K-Bot, but some last-minute adjustments had taken more time than planned. So instead he showed slides of his work and talked about his new invention: a synthetic substance he had started using for robotic skin that he called Frubber.

He told the audience about his first experiences building robots with wire frames and rubber skin. The problems with real rubber had quickly become apparent. For one thing, it is not very compressible. Rubber does not have the same folding properties as true skin. If a rubber mask is made with the same thickness as human skin, you need a lot of force to push it and pull it into different facial expressions. But if it is made much thinner, it does not look as lifelike and becomes weak and fragile. To make replicas of human faces that had the same emotional expressiveness as real humans he had to use large, heavy motors, which were difficult to work with. So he’d started experimenting with plastics and other materials. After a lot of trial and error he found a recipe for a compound that was much lighter than rubber, more pliant, and cheap to produce. It was also remarkably similar to human skin. He came up with the name Frubber and patented the formula.

At the end of his presentation Hanson apologized for not having his latest creation there for the audience, but he promised he would bring it to a special presentation the following day.

The next day, the room was packed with researchers, teachers, students, members of the public, and journalists. The chatter died as Hanson stepped forward. He held in his hands the disembodied head of a beautiful young woman.

“This is K-Bot,” he said.

Hanson had modeled K-Bot on Kristen Nelson, a research assistant at the campus lab he worked at in Dallas and his girlfriend at the time. Under her Frubber skin, K-Bot had twenty-four motors that could pull her face into thousands of unique expressions, showing the full range of human emotions. She had cost just $400 in parts.

“In terms of complexity of the parts and expense incurred, K-Bot is not the most expensive in the world. But in terms of the sophistication of what it is capable of doing, it is the most advanced,” Hanson told his audience. “It has the most expressive skin—it’s a polymer developed in my laboratory—and has a compressibility comparable with human skin. It also has a high elongation, which means it stretches very easily.”

K-Bot’s “eyes” were mounted cameras connected to a computer that fed into a facial recognition program. The face “recog” could detect expressions on your face if you were looking at K-Bot, and K-Bot could mimic that expression right back at you: a kind of three-dimensional mirror that could only reflect emotions.

Hanson told the audience that he planned to make his robot more intelligent to provide a true interactive experience. He joked that the robot did not have a body and so, despite being modeled on a woman, technically could not be classified as male or female.

“I guess it is sort of an androgynoid,” he said, and the crowd laughed.

That provided a catchy headline for the Guardian newspaper later in the week: “Human Face of Androgynoid.” The subtitle that followed had a little more bite: “Nice Smile, but Shame About K-Bot’s Personality.” The article pinpointed a weakness in Hanson’s work that he himself was aware of—the absence of intelligence. Certainly, he was developing a reputation for building sleek, attractive robots with unprecedented expressiveness, but beauty was not enough for him. After all, what is the point of a robot without a brain?

Hanson had trained as a sculptor at the Rhode Island School of Design, a small college in downtown Providence that sits between the Providence River and Brown University. The college has a small annual intake of students but receives thousands of applications and is arguably the most elite art school in the world, with alumni that include Seth MacFarlane, the creator of the animated series Family Guy; the New York architect Michael Gabellini; and David Byrne of the new wave rock group Talking Heads. The environment is conducive to experimenting with the synthesis of art and other fields, and Hanson dabbled with robotics at the college, once building a larger-than-life model of his own head that could be wheeled from room to room and have conversations with people through a remote device.

Even before college Hanson had developed an obsession with science fiction, reading the works of Isaac Asimov, Robert A. Heinlein, and, most of all, Philip K. Dick. Later, when he moved from art into robotics, his classical training in sculpture gave him the ability to create beauty where other roboticists produced mere functional mechanics. But such a background also left him at a disadvantage: he knew very little about AI.

After graduation, he moved to New Orleans and worked for Kern, a small sculpting company that had a steady stream of contract work for film companies such as Universal Studios and Walt Disney Imagineering. Two years later he moved to California to work at the Walt Disney Studios as a sculptor. The following year he moved laterally to a department known as Disney Technical Development. There he rediscovered his teenage sci-fi fantasies while working on several robotic projects.

Yoseph Bar-Cohen came across Hanson’s work and invited him to make a lifelike robot head and give a small presentation to his colleagues at NASA. Hanson agreed and produced the first of many prototypes, a self-portrait with motors for facial muscles. The skin for that robot was standard urethane, and its expressiveness was limited. Bar-Cohen was enthusiastic nonetheless, and Hanson’s work was warmly received at NASA. That gave him the confidence to build a second robot, learning from his mistakes with the first. He built a pirate head, complete with an eye patch and a leering grin. Pirate Robot’s skin was made out of an early formulation of Frubber. Hanson’s third robot was K-Bot. By this stage Hanson was beginning to refine his techniques. K-Bot was more advanced, had more motors, was more expressive and more physically appealing. K-Bot was also quicker to build.

Within days of the AAAS presentation, stories on Hanson and his work appeared in outlets ranging from New Scientist to the BBC. The Guardian was alone in its snarky reference to K-Bot’s lack of brains. Other media reports emphasized K-Bot’s impressive realism. Dan Ferber, in a long article on Hanson for Popular Science magazine, described the scene:

Hanson, 33, walks in and sets something on a table. It’s a backless head, bolted to a wooden platform, but it’s got a face, a real face, with soft flesh-toned polymer skin and finely sculpted features and high cheekbones and big blue eyes. Hanson hooks it up to his laptop, fiddles with the wires. He’s not saying much; it might be an awkward moment except for the fact that everyone else is too busy checking out the head to notice. Then Hanson taps a few keys and . . . it moves. It looks left and right. It smiles. It frowns, sneers, knits its brows anxiously.

Ferber declared: “K-Bot is a hit.”

How to Build an Android © David F. Dufty 2012